It’s no secret that I’m not a fan of Target’s new site. My post about that failure of a redesign has gotten quite a bit of attention, and people from all over the world have read it. A few times, someone has come to Target’s defense, and thanks to the logs and tracking we use, we have been able to find out that those people were at least partially involved in the project.

Logging Visitors with Loggly.com

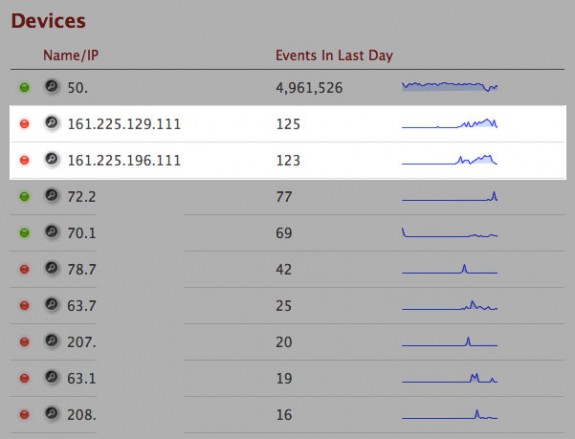

A couple of days ago, we saw a large number of visits coming through IP addresses we didn’t recognize. Checking the logs and doing an IP lookup allowed us to tie those visits to Target Corporation in Minnesota. Without Loggly doing some of the analysis automatically, we may not have noticed. Sure, the data is available through Google Analytics too, but how many people dig down to that level?

How We’ve Tied Loggly into WordPress

There are several ways to get data into Loggly for analysis. We’re dumping log files in, but I’ve also set WordPress up to use Loggly’s JavaScript logging to push JSON data in directly. With this method, I can collect quite a bit of data and get really granular about what is pushed to Loggly. Currently, I’m grabbing the following and pushing it to Loggly:

- Detecting the 404 page being displayed

- Parsing keywords from the referrer if they exist

- The URL of the current page

- The full referrer string

- Height and width of the client’s browser window

- Browser/User agent

Right now there isn’t anything earth-shattering in that data, but once you start getting into the shell or running searches, you’ll see that there is some pretty good data right at your fingertips.
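To make the list above concrete, here is a minimal sketch of how those fields could be assembled into a single JSON record. `buildLogRecord` and the sample values are hypothetical and not part of Loggly's library; only the field names (`url`, `browser`, `height`, `width`, `referrer`, `keyword`, `404`) come from this post.

```javascript
// Build one log record using the field names from this post.
// buildLogRecord is a hypothetical helper, not part of Loggly's library.
function buildLogRecord(page) {
  const record = {
    url: page.url,
    browser: page.userAgent,
    height: String(page.height),
    width: String(page.width),
    referrer: page.referrer || "",
    keyword: page.keyword || "",
    "404": page.is404 ? "true" : "false",
  };
  // JSON.stringify escapes quotes and backslashes, so odd characters in a
  // referrer or user agent cannot produce an invalid record.
  return JSON.stringify(record);
}

// Example call with made-up values:
const line = buildLogRecord({
  url: "http://example.com/blog/",
  userAgent: "ExampleBrowser/1.0",
  height: 800,
  width: 1280,
  referrer: "http://www.google.com/search?q=target",
  keyword: "target",
  is404: false,
});
console.log(line);
```

Letting `JSON.stringify` build the string, rather than concatenating it by hand, is one way to keep the record valid no matter what ends up in the referrer.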

Looking for a list of all the records for what has been a 404? That’s easy to grab with the following search:

search json.404:true

Maybe you want all the records for people who came from Google with Target in the keyword somewhere:

search json.keyword:target AND json.referrer:google
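If you want to sanity-check what those two searches should return before the data is in Loggly, a rough local stand-in looks something like this. The sample records are made up, and the simple substring match is only an approximation I am assuming for illustration; it is not Loggly's actual matching logic.

```javascript
// Hypothetical sample records using the field names from this post.
const records = [
  {
    url: "http://example.com/a",
    referrer: "http://www.google.com/search?q=target+redesign",
    keyword: "target redesign",
    "404": "false",
  },
  { url: "http://example.com/missing", referrer: "", keyword: "", "404": "true" },
  {
    url: "http://example.com/b",
    referrer: "http://search.yahoo.com/search?p=target",
    keyword: "target",
    "404": "false",
  },
];

// Rough stand-in for: search json.404:true
const notFound = records.filter((r) => r["404"] === "true");

// Rough stand-in for: search json.keyword:target AND json.referrer:google
const contains = (value, term) => value.toLowerCase().includes(term);
const googleTarget = records.filter(
  (r) => contains(r.keyword, "target") && contains(r.referrer, "google")
);

console.log(notFound.map((r) => r.url));
console.log(googleTarget.map((r) => r.url));
```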

To get the data I want from WordPress into Loggly, I created a little function that adds the necessary JavaScript to the page and populates the log with a string of JSON-formatted data. You should be able to use this function, or something similar, by hooking it into WordPress so that it prints right before the closing </body> tag.

<?php

//Add Loggly Code

function add_loggly(){

$url = ( is_ssl() ? 'https://' : 'http://' ) . $_SERVER['SERVER_NAME'] . $_SERVER['REQUEST_URI'];

//check for 404 page - default to false

$status = 'false';

if(is_404()){ $status = 'true'; } //if this is the WP 404 page, set 404 as true

//get the keyword

$kw = '';

$referrer = isset( $_SERVER['HTTP_REFERER'] ) ? $_SERVER['HTTP_REFERER'] : ''; //not every request sends a referrer

//Pull the query string out of the referrer (empty string if there is none)

$parsed = (string) parse_url( $referrer, PHP_URL_QUERY );

//Break the query string into an array

$kw_query = array();

parse_str( $parsed, $kw_query );

//google & bing first

if(isset($kw_query['q']) && $kw_query['q'] != ''){

$kw = $kw_query['q'];

}

//yahoo uses p - only grab if q didn't exist

if(isset($kw_query['p']) && $kw_query['p'] != '' && $kw == ''){

$kw = $kw_query['p'];

}

?>

<script type="text/javascript" src="http://d3eyf2cx8mbems.cloudfront.net/js/loggly-0.1.0.js"></script>

<script type="text/javascript">

window.onload=function(){

var key = "REPLACE WITH YOUR LOGGLY KEY";

var host = (("https:" == document.location.protocol) ? "https://logs.loggly.com" : "http://logs.loggly.com");

castor = new loggly({ url: host+'/inputs/'+key+'?rt=1', level: 'log'});

castor.log('{"url":"<?php echo esc_js( $url ); ?>","browser":"' + castor.user_agent + '","height":"' + castor.browser_size.height + '","width":"' + castor.browser_size.width + '","referrer":"<?php echo esc_js( $referrer ); ?>","keyword":"<?php echo esc_js( $kw ); ?>","404":"<?php echo $status; ?>"}');

}

</script>

<?php

}

?>
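The keyword parsing could also be done in the browser instead of in PHP. The sketch below mirrors the PHP logic above (prefer `q`, fall back to `p`) using the standard `URL` API. `extractKeyword` is a hypothetical helper name, and I'm assuming you would pass it `document.referrer` when running it in a page.

```javascript
// extractKeyword is a hypothetical helper mirroring the PHP logic above:
// prefer "q" (Google, Bing), fall back to "p" (Yahoo), else an empty string.
function extractKeyword(referrer) {
  if (!referrer) return "";
  let params;
  try {
    params = new URL(referrer).searchParams;
  } catch (err) {
    return ""; // not a parseable absolute URL
  }
  return params.get("q") || params.get("p") || "";
}

// Example calls with made-up referrers:
console.log(extractKeyword("http://www.google.com/search?q=target+site"));
console.log(extractKeyword("http://search.yahoo.com/search?p=target"));
console.log(extractKeyword(""));
```

One small difference from the PHP version: `searchParams.get` returns only the first value when a parameter is repeated, so a referrer with several `q` values yields the first one.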

If you are using Thesis, here is the hook to insert the code:

add_action('thesis_hook_after_html','add_loggly');

If you are using Genesis, here is the hook you would use:

add_action('genesis_after','add_loggly');

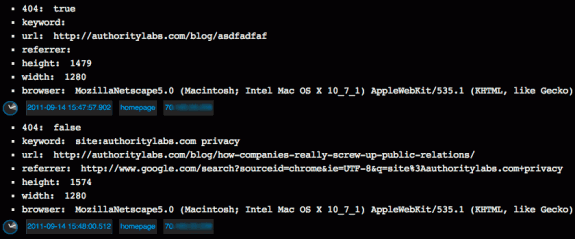

Here’s an example of what that data looks like once it’s dumped into Loggly’s system:

Realtime Traffic Monitoring

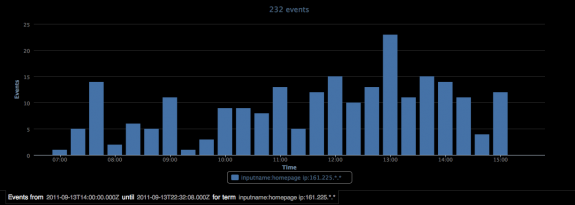

One thing we really like at AuthorityLabs is a good graph. Loggly has some cool graphing built in that lets you visualize the data being added to their system. Taking the data that Target Corporation was nice enough to give us for this test, we were able to graph their activity over the course of a day.

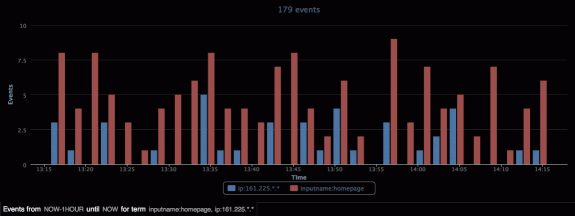

We were also able to graph and compare the number of visits coming from Target with overall visits to the part of our site running WordPress.

Life Lessons

The possibilities for logging, storing, and graphing data with Loggly seem to be endless. This initial test focused on Target once we noticed the traffic coming from their headquarters. I doubt they were here to do anything malicious. Hopefully someone over there learned a few things and got some good insights from my earlier post about their new site.

This will likely be the first in a series of posts about how we’re using Loggly and the cool insights that we’re able to gather as a result. Keep checking back and we promise to fill your head with some ideas.

Last, but not least, if you’re going to spy on us, don’t leave your webcam on and allow us to get some pics of your work space. Apparently one of the guys over at Target’s headquarters in Minnesota left his on and this is what we caught –